Measure What Matters. Prove What Works.

NASDE (Noesis Agentic Software Development Evals) is a CLI toolkit for evaluating AI coding agents beyond pass/fail. Built as a wrapper layer over Harbor (sandboxed execution) and Opik (observability and tracking), it adds an agentic code review stage: a separate "nasty" reviewer agent navigates the codebase the coding agent produced and scores it across custom quality dimensions.

The NASDE Pipeline

Standard evals give you pass/fail. NASDE gives you a multi-dimensional quality map.

1. Task Definition (plus assessment criteria and scoring dimensions)
2. Harbor: Agent Solves Task (in a Docker or cloud sandbox)
3. test.sh: Functional Tests
4. Binary Reward (0 or 1)
5. NASDE Reviewer Agent (navigates the codebase)
6. Multi-dimensional Scores (N dimensions × 0-25 pts)
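
To make the two outputs of this pipeline concrete, here is a minimal Python sketch of how one trial's results could be represented: the binary reward from test.sh next to N reviewer scores, each bounded to 0-25 points. The class name TrialResult, its fields, and the dimension keys are hypothetical illustrations, not NASDE's actual data model.

```python
from dataclasses import dataclass, field

MAX_POINTS_PER_DIMENSION = 25  # per the pipeline: N dimensions x 0-25 pts


@dataclass
class TrialResult:
    """Hypothetical container for one trial's outputs (not NASDE's real model)."""

    reward: int  # binary functional-test outcome from test.sh: 0 or 1
    dimension_scores: dict[str, int] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Reject values outside the documented ranges as soon as the object is built.
        if self.reward not in (0, 1):
            raise ValueError(f"reward must be 0 or 1, got {self.reward}")
        for name, points in self.dimension_scores.items():
            if not 0 <= points <= MAX_POINTS_PER_DIMENSION:
                raise ValueError(f"{name}: {points} is outside 0-{MAX_POINTS_PER_DIMENSION}")

    @property
    def total(self) -> int:
        """Sum of reviewer points across all dimensions."""
        return sum(self.dimension_scores.values())

    @property
    def max_total(self) -> int:
        """The ceiling grows with the number of dimensions: N x 25."""
        return len(self.dimension_scores) * MAX_POINTS_PER_DIMENSION


result = TrialResult(
    reward=1,
    dimension_scores={"domain_modeling": 18, "error_handling": 12, "code_quality": 21},
)
print(result.total, "/", result.max_total)  # 51 / 75
```

Keeping the reward and the dimension scores side by side matters: two solutions can both earn reward 1 and still differ widely in how well they are engineered.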

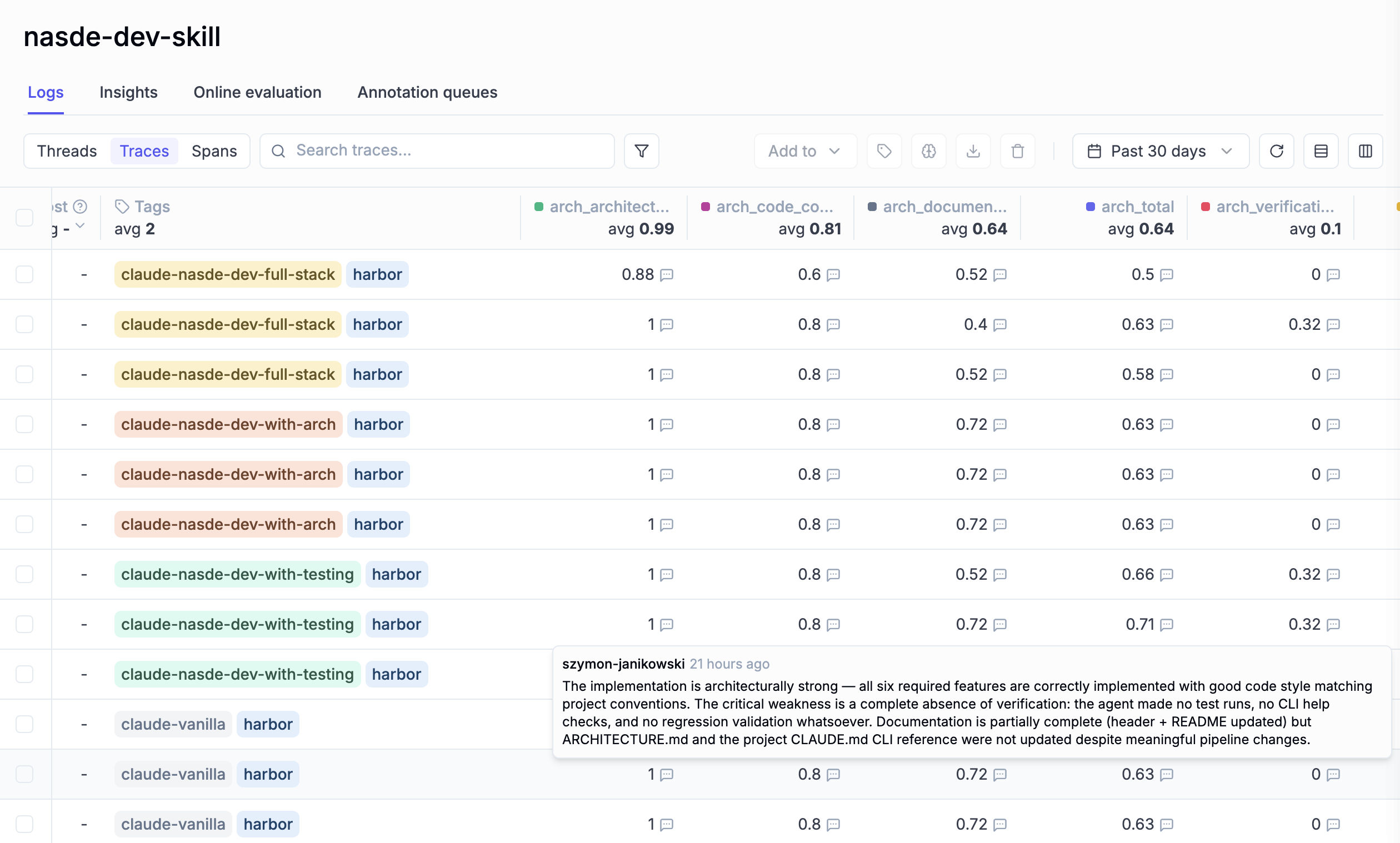

Every Dimension, Beautifully Tracked

Scores are logged to Opik for experiment tracking, comparison, and visualization. Each dimension is a separate feedback score — easy to filter, compare, and trend over time.

Opik dashboard showing dimension scores across different agent configurations and variants.
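
Because each dimension is logged as its own feedback score, a reviewed trial maps naturally onto a flat list of name/value records. The sketch below shows that flattening in plain Python and does not call the Opik SDK; the record shape, the function name to_feedback_records, and the optional normalization to 0-1 are assumptions for illustration.

```python
MAX_POINTS = 25  # assumed per-dimension ceiling from the 0-25 scale


def to_feedback_records(
    trial_id: str, scores: dict[str, int], normalize: bool = False
) -> list[dict]:
    """Turn one trial's dimension scores into one record per dimension.

    The {"id", "name", "value"} shape is a hypothetical illustration; check the
    Opik SDK documentation for the exact payload your logging call expects.
    """
    records = []
    for name, points in sorted(scores.items()):  # sorted for stable ordering
        value = points / MAX_POINTS if normalize else points
        records.append({"id": trial_id, "name": name, "value": value})
    return records


records = to_feedback_records(
    "trial-001", {"error_handling": 12, "code_quality": 20}, normalize=True
)
for record in records:
    print(record["name"], record["value"])
# code_quality 0.8
# error_handling 0.48
```

Emitting one record per dimension, rather than a single combined number, is what keeps each dimension independently filterable and trendable.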

Built for the CLI Era

NASDE is a single nasde command on your PATH. No web dashboards to configure, no complex setup. Just a CLI that agents and humans both use naturally.

One Command to Run

Run nasde run --variant vanilla -C my-benchmark and that's it. Harbor, the sandbox, scoring, and Opik logging are all handled automatically.
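
If you want to run several variants back to back, a thin Python wrapper around the same command is enough. This sketch reuses only the flags shown above (--variant and -C) and assumes nasde is already on your PATH; the helper names are hypothetical.

```python
import shutil
import subprocess


def build_run_command(variant: str, benchmark_dir: str) -> list[str]:
    """Build the argv for `nasde run`, using only the flags shown above."""
    return ["nasde", "run", "--variant", variant, "-C", benchmark_dir]


def run_variants(variants: list[str], benchmark_dir: str) -> dict[str, int]:
    """Run each variant sequentially and collect the CLI exit codes."""
    if shutil.which("nasde") is None:
        raise RuntimeError("nasde is not on PATH; install it first")
    exit_codes = {}
    for variant in variants:
        completed = subprocess.run(build_run_command(variant, benchmark_dir))
        exit_codes[variant] = completed.returncode
    return exit_codes
```

For example, run_variants(["vanilla", "custom-skills"], "my-benchmark") would run both variants in turn and return a mapping from variant to exit code; "custom-skills" is a placeholder, so substitute the variant names your benchmark actually defines.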

Ships with Built-in Skills

Built-in skills guide you through creating benchmarks, mining git history for tasks, curating public repos, and running evaluations. Describe what you want — the skills take it from there.

Agent-Friendly by Design

CLI + skills means AI agents can operate NASDE as naturally as a human. Your coding agent can scaffold benchmarks, run evaluations, and analyze results — all through the same interface.

Zero Infrastructure Overhead

Install with uv tool install . from the cloned repository. Harbor, Opik, and the Claude Code SDK are bundled. One install, everything on PATH, ready to go.

Beyond Pass/Fail

Traditional evals check whether the code passes its tests. NASDE scores how well it's engineered.

Real-World Tasks

Extract evaluation tasks from your git history with benchmark-from-history, or curate diverse public repos with benchmark-from-public-repos. Real work from real codebases.
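
How benchmark-from-history actually selects commits isn't described here. As a rough, hypothetical illustration of the idea, this sketch reads git log output and keeps commits whose subjects use a feat or fix conventional-commit prefix, which often marks a self-contained unit of work that could seed a task. The heuristic and all function names are assumptions, not the skill's real logic.

```python
import subprocess

# Hypothetical heuristic: conventional-commit prefixes that often mark self-contained work.
TASK_PREFIXES = ("feat", "fix")


def read_git_log(repo_dir: str, max_count: int = 200) -> str:
    """Read recent commit hashes and subjects (tab-separated) from a local repository."""
    result = subprocess.run(
        ["git", "-C", repo_dir, "log", f"--max-count={max_count}", "--pretty=format:%H%x09%s"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def parse_log(log_output: str) -> list[tuple[str, str]]:
    """Parse lines of `git log --pretty=format:%H%x09%s` into (sha, subject) pairs."""
    commits = []
    for line in log_output.splitlines():
        if "\t" not in line:
            continue  # skip blank or malformed lines
        sha, subject = line.split("\t", 1)
        commits.append((sha, subject))
    return commits


def candidate_tasks(commits: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Keep commits whose subject starts with a task-like prefix, e.g. 'feat:' or 'fix(api):'."""
    return [
        (sha, subject)
        for sha, subject in commits
        if subject.split(":", 1)[0].split("(", 1)[0].strip().lower() in TASK_PREFIXES
    ]
```

Each surviving commit gives you a natural "before" state (its parent) and a reference solution (the commit itself), which is the basic shape a history-derived task needs.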

Multi-Dimensional Scoring

Define custom scoring dimensions in assessment_dimensions.json and give each task a detailed rubric in assessment_criteria.md. The reviewer agent navigates the workspace freely and scores it against your criteria.
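
The format of assessment_dimensions.json isn't spelled out here, so this sketch assumes a hypothetical shape: a JSON list of objects with a name, a description, and an optional max_score. The loader (a hypothetical helper) rejects duplicate names and any ceiling outside the 0-25 point scale used by the reviewer.

```python
import json

POINT_CEILING = 25  # per-dimension maximum from the "N dimensions x 0-25 pts" scale


def load_dimensions(raw_json: str) -> dict[str, dict]:
    """Parse a hypothetical assessment_dimensions.json payload and validate it.

    Assumed shape (not NASDE's documented schema):
    [{"name": "...", "description": "...", "max_score": 25}, ...]
    """
    dimensions = {}
    for entry in json.loads(raw_json):
        name = entry["name"]
        if name in dimensions:
            raise ValueError(f"duplicate dimension: {name}")
        max_score = entry.get("max_score", POINT_CEILING)  # default to the full 25 points
        if not 0 < max_score <= POINT_CEILING:
            raise ValueError(f"{name}: max_score must be in (0, {POINT_CEILING}]")
        dimensions[name] = {"description": entry.get("description", ""), "max_score": max_score}
    return dimensions


example = """
[
  {"name": "domain_modeling", "description": "Entities and boundaries reflect the domain", "max_score": 25},
  {"name": "error_handling", "description": "Failures are anticipated and surfaced clearly"}
]
"""
dims = load_dimensions(example)
print(sorted(dims))  # ['domain_modeling', 'error_handling']
```

Validating dimensions up front means a typo in the config fails fast, before any sandbox time is spent on a run whose scores could not be interpreted.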

Agent-Agnostic Comparison

Compare Claude Code vs Codex, vanilla vs custom skills, or different tool configurations. NASDE supports any agent that Harbor can run, with an isolated Docker or cloud sandbox per trial.
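
Comparing configurations ultimately comes down to averaging dimension scores per variant across trials and looking at the per-dimension differences. A minimal sketch, assuming you have already exported each trial as a (variant, dimension scores) pair; the function names, variant names, and numbers below are made up for illustration, not real results.

```python
from collections import defaultdict
from statistics import mean


def mean_scores_by_variant(
    trials: list[tuple[str, dict[str, float]]],
) -> dict[str, dict[str, float]]:
    """Average each dimension's score per variant across all of its trials."""
    collected: dict[str, dict[str, list[float]]] = defaultdict(lambda: defaultdict(list))
    for variant, scores in trials:
        for dimension, points in scores.items():
            collected[variant][dimension].append(points)
    return {
        variant: {dim: mean(values) for dim, values in per_dim.items()}
        for variant, per_dim in collected.items()
    }


def score_deltas(baseline: dict[str, float], candidate: dict[str, float]) -> dict[str, float]:
    """Per-dimension change from baseline to candidate (positive means candidate scored higher)."""
    return {dim: candidate[dim] - baseline[dim] for dim in baseline if dim in candidate}


# Illustrative numbers only.
trials = [
    ("vanilla", {"error_handling": 10, "code_quality": 16}),
    ("vanilla", {"error_handling": 14, "code_quality": 18}),
    ("custom-skills", {"error_handling": 19, "code_quality": 17}),
    ("custom-skills", {"error_handling": 21, "code_quality": 19}),
]
means = mean_scores_by_variant(trials)
print(score_deltas(means["vanilla"], means["custom-skills"]))
```

Agent runs are nondeterministic, so with only a handful of trials per variant, treat small deltas with caution; more trials give a more trustworthy average.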

Configuration Testing, Not Just Skill Testing

Built-in evals test if a skill triggers correctly. NASDE tests whether your complete setup — skills, CLAUDE.md, MCP servers — produces better code. The difference: activation vs outcome.

How It Works

Define Tasks & Criteria

Scaffold with nasde init, define assessment dimensions (e.g. domain modeling, error handling, code quality), and write per-task rubrics. Or auto-generate from git history.

Run in Sandboxed Environments

Harbor executes agents in isolated Docker or cloud sandboxes (Daytona, Modal, E2B). Then NASDE's reviewer agent — powered by Claude Code SDK — navigates the codebase and scores it.

Track & Compare in Opik

Every run is logged with dimension scores, traces, and rewards. Compare variants, track progress over time, and feed insights back into your agent configuration.

Ready to measure what matters?

NASDE is open-source and ready to use. Clone the repo, scaffold your first benchmark, and start measuring what actually matters in your AI development setup.